5/27/23

Assisting you through the process of riding in an Uber

How could AI-driven technologies alert you to time-sensitive information about your Uber, and provide updates on your ride while you're in transit?

Background

I've been creating speculative concepts for Humane's recently unveiled AI-powered device for the past few months. In this concept, I've decided to explore how such a device could help a user find their Uber, ensure it's the correct vehicle before entering, and receive relevant updates while they're on the ride.

I chose to explore this flow since I find some of the most compelling concepts to be the ones where the device can display information not just on your hand, but also on different surfaces in front of you. I also think that in this case, more contextual information could benefit the rider and give them confidence that they’re entering the correct vehicle.

There's a stark contrast I've noticed when designing for this AI-powered device compared to designing for traditional smartphones. Since the device would be aware of your context at all times, the interfaces become much simpler, displaying far less information and far fewer options at any given time. I imagine that this device would anticipate your needs and reduce the amount of input needed to accomplish any given task. This is just one of many aspects of this device I'm curious to learn more about as more information is revealed.

Concept Scope

To illustrate how this device could help you during an Uber ride, I decided to explore how three different points of the journey could be displayed on different surfaces. There is additional complexity to this flow that falls outside the scope of this concept, and that I look forward to exploring in the future. This includes crucial aspects like hailing a ride, managing payment methods, and critical safety features. All of these would need to be functional on the Humane device, and I imagine the device could proactively manage some of these things as well.

Designing & Prototyping

I go into detail on how I create these prototypes in my first blog post here. To summarize, I created these concepts using a small, battery-powered projector, which I connect to my computer and design for using Principle and Figma. When I get something I'm happy with, I take it out into the real world and start recording videos.

I've mentioned this in previous blog posts, but I'm excited about how simple each interface becomes when it's presented using a device like this. By displaying information only as it's needed in different parts of the journey, this device could remove a lot of the complexity we're used to in modern interfaces and make user interfaces much simpler and more essential. In each part of this concept, the amount of information I needed to include was small, and this allowed me to focus on presenting it with subtle, smooth animations.

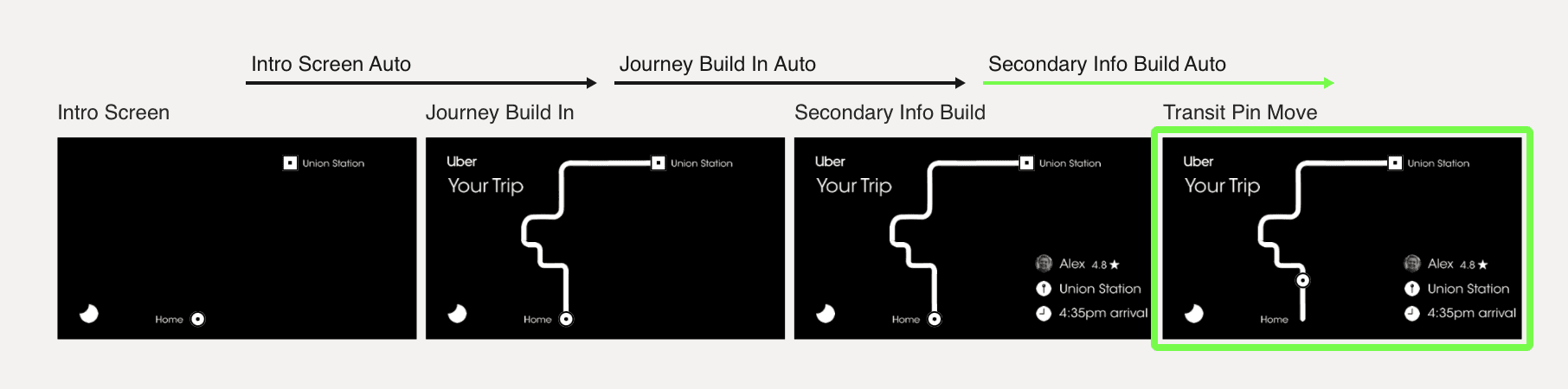

A look at how part of the flow moves in my Principle prototype. I chose to have different pieces of information appear in stages, to avoid presenting an overwhelming amount of information all at once and to draw the rider's attention to new pieces of the flow as they're added.

The Uber Flow

As mentioned above, I decided to focus on three aspects of this journey to showcase how Humane's device could help you find your Uber. The areas I've chosen to focus on are:

Finding the right car

Entering the right car confidently

Receiving updates on the trip during the ride

Finding the right car

In this part of the journey, a user has already called a ride and it's approaching their location. Today, this step requires pulling out your phone to check the car's location and find details about it. I imagine the Humane device could display crucial information about the approaching vehicle on your hand, so you can quickly refer to it as many times as needed before getting in the car.

I've also chosen to highlight the license plate in a larger font, so you can reference it as the vehicle approaches and identify the right car. This simpler interface removes unnecessary functions during this time and instead focuses on presenting exactly the right amount of information at this moment.

A short video demonstration of a user referring to information projected on their hand to help them identify the right vehicle.

Entering the right car confidently

While this interface appears to be the simplest, it's actually very powerful. Here, the user has been able to identify the right vehicle using the information provided in the previous step. As they approach the vehicle and prepare to enter, a personalized welcome message is displayed on the vehicle door. Seeing their name would help to ensure they know they're entering the right vehicle.

One important aspect of this, though I didn't show it, is the opposite message. Since the device is able to understand the user's context to help them enter the right car, it could also help them avoid entering the wrong one. If they mistakenly try to enter a car that isn't theirs, the device could display a message telling them not to get in and guiding them to the right car instead, avoiding an awkward or potentially unsafe situation.

A welcome message displayed on the vehicle helps the rider to confidently know that they're entering the right car.
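The welcome-or-warning decision described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: it supposes the device can somehow read the plate of the car the rider is approaching, and `BookedRide`, `normalize_plate`, and `door_message` are hypothetical names of my own, not part of any Humane or Uber API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BookedRide:
    """Hypothetical record of the ride the user has booked."""
    rider_name: str
    license_plate: str


def normalize_plate(plate: str) -> str:
    """Uppercase the plate and drop spaces, dashes, and other punctuation."""
    return "".join(ch for ch in plate.upper() if ch.isalnum())


def door_message(ride: BookedRide, observed_plate: str) -> str:
    """Pick the message to project on the door of the car being approached.

    Assumes the device has already read `observed_plate` from the vehicle.
    """
    if normalize_plate(observed_plate) == normalize_plate(ride.license_plate):
        return f"Welcome, {ride.rider_name}"
    # Wrong car: warn the rider and remind them which plate to look for.
    return f"Not your ride. Look for plate {ride.license_plate}"
```

For example, with `BookedRide("Sam", "7ABC 123")`, an observed plate of `"7abc-123"` would produce the welcome message, while any other plate would produce the warning.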

Receiving updates on the trip during the ride

Finally, when a rider has entered the correct vehicle, they could see information about the trip displayed on the seat in front of them. This could also be helpful for other passengers in the car to stay up to date on the trip.

This information would dynamically adjust based on where they are in the journey, and display up-to-date arrival times in case of traffic or other unexpected delays. In my opinion, the most interesting difference from the existing smartphone version is that all passengers in the back seat could easily view this information, without having to ask the person whose account is in use for updates.

Showcasing details of the journey on the back of the seat in front of a rider. This approach would allow all passengers in the back seat to easily get updates on the journey as it happens.
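As a rough sketch of how the arrival time shown on the seat back could stay current, the display text could be recomputed whenever the remaining time or an expected delay changes. The function name `arrival_summary`, its parameters, and the wording of the output are all assumptions made for illustration.

```python
from datetime import datetime, timedelta


def arrival_summary(now: datetime, remaining_minutes: float,
                    delay_minutes: float = 0.0) -> str:
    """Build the arrival line to display, including any known delay."""
    # Never show a negative number of minutes.
    total = max(0, round(remaining_minutes + delay_minutes))
    eta = now + timedelta(minutes=total)
    return f"Arriving {eta.strftime('%H:%M')} ({total} min)"
```

With `now` set to 14:00 and 12 minutes remaining, this returns "Arriving 14:12 (12 min)"; if a 5 minute traffic delay is reported, the same call with `delay_minutes=5` returns "Arriving 14:17 (17 min)".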

What I learned

I've mentioned it throughout the explanation of this concept, but one of the most important things I'm learning through the exercise of creating these concepts is how simple interfaces could become when only the most critical, relevant information is presented at any given moment. Since this device would be aware of your location and potentially your surroundings, you would have much less to navigate through to find what you need compared to current smartphone UI.

There are other ways this concept could be simplified even further, depending on the capabilities of the device, that I didn't explore here. Instead of showing you a license plate number to look for, could the device just display an arrow on your hand that points you toward your ride? Maybe instead of a welcome message being displayed on the car, the confirmation could be as simple as an audio tone played from the device that lets you know whether you're entering the right vehicle or not. These explorations are just ideas, and there are so many other ways this concept could be executed as well.

As a result of this exploration, I'm even more interested in the device and its capabilities, and I look forward to continuing to create concepts and imagine what it could do. By reducing the cognitive load on users in even more situations, this device could redefine what it means to accomplish tasks and access information, making both simpler, easier, and more accessible.

-Michael